Signal Processing with NAudio (Part 9)

Signal Processing with NAudio (Table of Contents) - Hope is a Dream. Dream is a Hope.

Effect Processing Part 3 (Echo!!!!)

C# Audio Tutorial 9 - EffectStream Part 3 (Echo!)

At last, we implement an actual effect!

Echo.cs

Create Echo.cs, which implements the IEffect interface (IEffect.cs) created earlier in Part 8.

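```csharp
using System.Collections.Generic;

namespace Tutorial
{
    public class Echo : IEffect
    {
        // Delay in samples (per channel, because each channel gets its own Echo)
        public int EchoLength { get; private set; }

        // Gain applied to the delayed sample
        public float EchoFactor { get; set; }

        // Delay line: holds the last EchoLength input samples
        private Queue<float> samples;

        public Echo(int length = 20000, float factor = 0.5f)
        {
            this.EchoLength = length;
            this.EchoFactor = factor;
            this.samples = new Queue<float>();

            // Pre-fill with silence so the echo stays silent until
            // `length` samples have gone by
            for (int i = 0; i < length; i++) samples.Enqueue(0f);
        }

        float IEffect.ApplyEffect(float sample)
        {
            samples.Enqueue(sample); // push the current sample into the delay line

            // Mix in the sample from EchoLength samples ago.
            // Note: the sum can exceed 1.0, so loud material may clip.
            return sample + EchoFactor * samples.Dequeue();
        }
    }
}
```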
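The Echo class is a feedforward delay. For input x and output y, it computes y[n] = x[n] + EchoFactor · x[n − EchoLength], and the Queue serves as a FIFO of length EchoLength. The sketch below implements the same formula with a fixed `float[]` ring buffer in place of the Queue, which makes the delay line explicit. The class name `RingBufferEcho` and its `Process` method are my own illustration, not part of the tutorial or NAudio. To use it in EffectStream, you would implement `IEffect` and forward `ApplyEffect` to `Process`.

```csharp
using System;

namespace Tutorial.Sketches
{
    // Hypothetical alternative to Echo: same formula y[n] = x[n] + factor * x[n - length],
    // with the delay line stored in a fixed-size array used as a ring buffer.
    public class RingBufferEcho
    {
        private readonly float[] delayLine; // the last `length` input samples
        private int index;                  // slot holding the oldest sample

        public float EchoFactor { get; set; }

        public RingBufferEcho(int length = 20000, float factor = 0.5f)
        {
            if (length <= 0) throw new ArgumentOutOfRangeException(nameof(length));
            delayLine = new float[length]; // starts as silence, like the zero-filled Queue
            EchoFactor = factor;
        }

        public float Process(float sample)
        {
            float delayed = delayLine[index];        // x[n - length]
            delayLine[index] = sample;               // store x[n] in its place
            index = (index + 1) % delayLine.Length;  // advance to the next-oldest slot
            return sample + EchoFactor * delayed;
        }
    }
}
```

Feeding it a unit impulse with `length = 3` returns the impulse unchanged, followed three samples later by a copy scaled by `EchoFactor`.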

EffectStream.cs

```csharp
using System;
using System.Collections.Generic;
using NAudio.Wave;

namespace Tutorial
{
    public class EffectStream : WaveStream
    {
        public WaveStream SourceStream { get; set; }
        public List<IEffect> Effects { get; set; }

        public EffectStream(WaveStream stream)
        {
            this.SourceStream = stream;
            this.Effects = new List<IEffect>();
        }

        //... (omitted)

        // Channel of the next sample; kept between Read calls
        private int channel = 0;

        public override int Read(byte[] buffer, int offset, int count)
        {
            //*****************************************************************//
            // Signal processing happens here
            //*****************************************************************//
            Console.WriteLine("DirectSoundOut request {0} bytes", count); // debug output

            int read = this.SourceStream.Read(buffer, offset, count);

            // Signal processing
            for (int i = 0; i < read / 4; i++)
            {
                // Byte position of this sample (the data starts at `offset`)
                int pos = offset + i * 4;

                // float = Single = 32 bit = 4 bytes
                float sample = BitConverter.ToSingle(buffer, pos);

                // Apply the effect. Samples are interleaved [1ch, 2ch, 1ch, 2ch, ...],
                // so each sample goes to the effect for its own channel.
                if (Effects.Count == WaveFormat.Channels)
                {
                    sample = Effects[channel].ApplyEffect(sample);
                    channel = (channel + 1) % WaveFormat.Channels;
                }

                // float -> byte array (4 bytes, so bytes[4])
                byte[] bytes = BitConverter.GetBytes(sample);

                // Copy back
                //bytes.CopyTo(buffer, pos); // slow
                buffer[pos + 0] = bytes[0];
                buffer[pos + 1] = bytes[1];
                buffer[pos + 2] = bytes[2];
                buffer[pos + 3] = bytes[3];
            }
            return read;
        }
    }
}
```
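The loop in Read treats the buffer as interleaved 32-bit IEEE float samples (L, R, L, R, ... for stereo), which is what WaveChannel32 delivers, and sends each sample to the effect for its channel. The sketch below moves that logic into a hypothetical static helper, `InterleavedFloatProcessor.Process`, so it can be tried without NAudio. Its name and parameters are my own. It respects the `offset` argument and carries the channel counter across calls through a `ref` parameter, as EffectStream does with its `channel` field.

```csharp
using System;

namespace Tutorial.Sketches
{
    public static class InterleavedFloatProcessor
    {
        // Applies perChannel[c] to every sample of channel c in an interleaved
        // 32-bit float buffer. `channel` is the channel of the first sample and
        // is updated so that the next call continues where this one stopped.
        public static void Process(byte[] buffer, int offset, int count,
                                   Func<float, float>[] perChannel, ref int channel)
        {
            int sampleCount = count / 4; // 4 bytes per float sample
            for (int i = 0; i < sampleCount; i++)
            {
                int pos = offset + i * 4; // samples start at `offset`, not at 0
                float sample = BitConverter.ToSingle(buffer, pos);
                sample = perChannel[channel](sample);
                byte[] bytes = BitConverter.GetBytes(sample);
                Buffer.BlockCopy(bytes, 0, buffer, pos, 4); // write the 4 bytes back in place
                channel = (channel + 1) % perChannel.Length;
            }
        }
    }
}
```

For example, with `perChannel = { s => s * 2f, s => -s }`, a stereo buffer becomes a doubled left channel and a phase-inverted right channel, and bytes before `offset` are left untouched.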

Form1.cs

All that remains is to add the effects to the playback chain.

```csharp
// Open a WAV file
OpenFileDialog open = new OpenFileDialog();
open.Filter = "WAV File (*.wav)|*.wav;";
if (open.ShowDialog() != DialogResult.OK) return;

// Audio chain:
// WaveFileReader -> WaveChannel32 -> EffectStream -> BlockAlignReductionStream -> DirectSoundOut
WaveChannel32 wave = new WaveChannel32(new WaveFileReader(open.FileName));
EffectStream effect = new EffectStream(wave); // pipeline stage that applies the effects
stream = new BlockAlignReductionStream(effect); // `stream` is a field of the form

// Effects: one Echo per channel
for (int i = 0; i < wave.WaveFormat.Channels; i++)
{
    effect.Effects.Add(new Echo(20000, 0.5f));
}

// Output (200 ms latency); `output` is a field of the form
output = new DirectSoundOut(200);
output.Init(stream);
output.Play();
```
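The `length` argument of Echo counts samples per channel, not time. Because EffectStream gives each channel its own Echo and feeds it only that channel's samples, `new Echo(20000, 0.5f)` delays by 20000 / sampleRate seconds, about 0.45 s for a 44.1 kHz file. A small helper makes the delay easier to specify in milliseconds. `DelayMath` is hypothetical and not part of the tutorial.

```csharp
using System;

namespace Tutorial.Sketches
{
    public static class DelayMath
    {
        // Converts a delay in milliseconds to a per-channel sample count.
        public static int MillisecondsToSamples(int sampleRate, double milliseconds)
        {
            return (int)Math.Round(sampleRate * milliseconds / 1000.0);
        }

        // Converts a per-channel sample count back to milliseconds.
        public static double SamplesToMilliseconds(int sampleRate, int samples)
        {
            return samples * 1000.0 / sampleRate;
        }
    }
}
```

In the form, you could then write something like `new Echo(DelayMath.MillisecondsToSamples(wave.WaveFormat.SampleRate, 300), 0.5f)` to request a 300 ms echo whatever the file's sample rate.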

Signal Processing with NAudio (Part 8)

Preparing the Effects, Part 2

C# Audio Tutorial 8 - EffectStream Part 2

This continues from Part 6.

Prepare an interface for the effects.

IEffect.cs

```csharp
namespace Tutorial
{
    public interface IEffect
    {
        float ApplyEffect(float sample);
    }
}
```
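Any class with a `float ApplyEffect(float sample)` method can be plugged into EffectStream through this interface. As a minimal illustration, here is an effect that only scales each sample. The `Gain` class is hypothetical and not from the tutorial. Like the Echo class in Part 9, it uses an explicit interface implementation, so it has to be called through an `IEffect` reference. The interface is repeated so that the block compiles on its own.

```csharp
namespace Tutorial.Sketches
{
    // Same shape as the tutorial's IEffect, repeated so this block is self-contained.
    public interface IEffect
    {
        float ApplyEffect(float sample);
    }

    // Hypothetical effect: multiplies every sample by a constant factor.
    public class Gain : IEffect
    {
        public float Factor { get; set; }

        public Gain(float factor = 1.0f)
        {
            Factor = factor;
        }

        // Explicit implementation, as in the tutorial's Echo class
        float IEffect.ApplyEffect(float sample)
        {
            return sample * Factor;
        }
    }
}
```

Because EffectStream only applies effects when `Effects.Count == WaveFormat.Channels`, you would add one `Gain` per channel, just as the form does with Echo.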

EffectStream.cs

```csharp
using System;
using System.Collections.Generic;
using NAudio.Wave;

namespace Tutorial
{
    public class EffectStream : WaveStream
    {
        public WaveStream SourceStream { get; set; }

        // List of effects (one per channel)
        public List<IEffect> Effects { get; set; }

        public EffectStream(WaveStream stream)
        {
            this.SourceStream = stream;
            this.Effects = new List<IEffect>(); // initialize the effect list
        }

        // Format, length and position are passed through from the source stream
        public override WaveFormat WaveFormat
        {
            get { return this.SourceStream.WaveFormat; }
        }

        public override long Length
        {
            get { return SourceStream.Length; }
        }

        public override long Position
        {
            get { return this.SourceStream.Position; }
            set { this.SourceStream.Position = value; }
        }

        // Channel of the next sample; kept between Read calls
        private int channel = 0;

        public override int Read(byte[] buffer, int offset, int count)
        {
            //*****************************************************************//
            // Signal processing happens here
            //*****************************************************************//
            Console.WriteLine("DirectSoundOut request {0} bytes", count); // debug output

            int read = this.SourceStream.Read(buffer, offset, count);

            // Signal processing
            for (int i = 0; i < read / 4; i++)
            {
                // Byte position of this sample (the data starts at `offset`)
                int pos = offset + i * 4;

                // float = Single = 32 bit = 4 bytes
                float sample = BitConverter.ToSingle(buffer, pos);

                // Apply the effect for this sample's channel (samples are interleaved)
                if (Effects.Count == WaveFormat.Channels)
                {
                    sample = Effects[channel].ApplyEffect(sample);
                    channel = (channel + 1) % WaveFormat.Channels;
                }

                // float -> byte array (4 bytes, so bytes[4])
                byte[] bytes = BitConverter.GetBytes(sample);

                // Copy back
                //bytes.CopyTo(buffer, pos); // slow
                buffer[pos + 0] = bytes[0];
                buffer[pos + 1] = bytes[1];
                buffer[pos + 2] = bytes[2];
                buffer[pos + 3] = bytes[3];
            }
            return read;
        }
    }
}
```

Signal Processing with NAudio (Part 7)

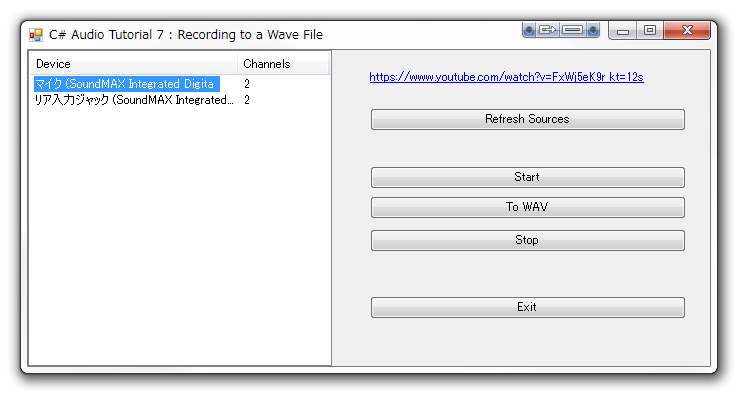

Selecting a Recording Device, and Playback

C# Audio Tutorial 6 - Audio Loopback using

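Tutorial 6 finally showed how to select a device. We start with recording devices: get the list of input devices and display it in a ListView.

In Tutorial 7, the audio stream from the recording device is written to a WAV file.

The audio chain is as follows: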

WaveIn(rec) -> Callback() -> waveWriter
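At the end of this chain, waveWriter puts a RIFF/WAVE header in front of the recorded bytes, and `new WaveFormat(44100, channels)` describes 16-bit PCM. To make that last stage less of a black box, here is a sketch of the canonical 44-byte PCM header. It illustrates the file format only and is not NAudio's implementation (WaveFileWriter may write a different layout). `WavHeaderSketch` is a hypothetical name. The sketch also shows where the byte rate comes from: sampleRate × channels × bitsPerSample / 8.

```csharp
using System.IO;
using System.Text;

namespace Tutorial.Sketches
{
    public static class WavHeaderSketch
    {
        // Writes a canonical 44-byte header for uncompressed PCM audio.
        // dataLength is the number of audio bytes that will follow the header.
        public static void WritePcmHeader(Stream output, int sampleRate, short channels,
                                          short bitsPerSample, int dataLength)
        {
            short blockAlign = (short)(channels * bitsPerSample / 8); // bytes per sample frame
            int byteRate = sampleRate * blockAlign;                   // bytes per second

            // leaveOpen: true so the caller can keep writing audio data to the stream.
            // BinaryWriter always writes little-endian, as the WAV format requires.
            using (var w = new BinaryWriter(output, Encoding.ASCII, true))
            {
                w.Write(Encoding.ASCII.GetBytes("RIFF"));
                w.Write(36 + dataLength);                  // size of everything after this field
                w.Write(Encoding.ASCII.GetBytes("WAVE"));
                w.Write(Encoding.ASCII.GetBytes("fmt "));
                w.Write(16);                               // size of the PCM fmt chunk
                w.Write((short)1);                         // format tag 1 = PCM
                w.Write(channels);
                w.Write(sampleRate);
                w.Write(byteRate);
                w.Write(blockAlign);
                w.Write(bitsPerSample);
                w.Write(Encoding.ASCII.GetBytes("data"));
                w.Write(dataLength);                       // number of audio bytes
            }
        }
    }
}
```

For a one-second stereo recording at 44100 Hz and 16 bits, the byte rate and the data size are both 176400 bytes.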

```csharp
private void button_ToWav_Click(object sender, EventArgs e)
{
    if (listView_Sources.SelectedItems.Count == 0) return;

    // Audio chain: WaveIn(rec) -> Callback() -> waveWriter

    // WAV file to record into
    SaveFileDialog save = new SaveFileDialog();
    save.Filter = "Wave File (*.wav)|*.wav;";
    if (save.ShowDialog() != DialogResult.OK) return;

    // Recording device number
    int deviceNumber = listView_Sources.SelectedItems[0].Index;

    // WaveIn: select the recording device
    sourceStream = new WaveIn();
    sourceStream.DeviceNumber = deviceNumber;
    sourceStream.WaveFormat = new WaveFormat(44100, WaveIn.GetCapabilities(deviceNumber).Channels);

    // Recording callback
    sourceStream.DataAvailable += new EventHandler<WaveInEventArgs>(sourceStream_DataAvailable);

    // WAV output
    waveWriter = new WaveFileWriter(save.FileName, sourceStream.WaveFormat);

    // Start recording
    sourceStream.StartRecording();
}

private void sourceStream_DataAvailable(object sender, WaveInEventArgs e)
{
    if (waveWriter == null) return;

    // Write to the WAV file (only the first e.BytesRecorded bytes of e.Buffer are valid)
    waveWriter.Write(e.Buffer, 0, e.BytesRecorded);
    waveWriter.Flush();
}
```
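Each DataAvailable call hands over `e.BytesRecorded` bytes, which makes it a convenient place to track how much has been recorded. Since `new WaveFormat(44100, channels)` describes 16-bit PCM, one second of audio is 44100 × channels × 2 bytes. The hypothetical `RecordingMeter` below is not part of NAudio or the tutorial. It accumulates byte counts and converts them to seconds. In the form, you would call `meter.Add(e.BytesRecorded)` next to `waveWriter.Write(...)`.

```csharp
namespace Tutorial.Sketches
{
    public class RecordingMeter
    {
        private readonly int bytesPerSecond;

        public long TotalBytes { get; private set; }

        public RecordingMeter(int sampleRate, int channels, int bitsPerSample = 16)
        {
            // bytes per second = sample rate x channels x bytes per sample
            bytesPerSecond = sampleRate * channels * (bitsPerSample / 8);
        }

        // Call once per DataAvailable with e.BytesRecorded.
        public void Add(int bytesRecorded)
        {
            TotalBytes += bytesRecorded;
        }

        // Recorded duration so far, in seconds
        public double TotalSeconds
        {
            get { return (double)TotalBytes / bytesPerSecond; }
        }
    }
}
```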

Form1.cs (full listing)

```csharp
using System;
using System.Collections.Generic;
using System.Windows.Forms;
using NAudio.Wave;

namespace Tutorial
{
    public partial class Form1 : Form
    {
        #region form
        public Form1()
        {
            InitializeComponent();
        }

        /// <summary>
        /// Form closing handler.
        /// Responsible for disposing the Wave-related objects.
        /// </summary>
        private void Form1_FormClosing(object sender, FormClosingEventArgs e)
        {
            // Release the devices and close the WAV file (safe to call more than once)
            button_Stop_Click(sender, EventArgs.Empty);
        }
        #endregion

        private void button_Refresh_Click(object sender, EventArgs e)
        {
            // Set up the list of WaveIn (recording) devices
            List<WaveInCapabilities> sources = new List<WaveInCapabilities>();
            for (int i = 0; i < WaveIn.DeviceCount; i++)
            {
                sources.Add(WaveIn.GetCapabilities(i));
            }

            listView_Sources.Items.Clear();
            foreach (var source in sources)
            {
                ListViewItem item = new ListViewItem(source.ProductName);
                item.SubItems.Add(new ListViewItem.ListViewSubItem(item, source.Channels.ToString()));
                listView_Sources.Items.Add(item);
            }
        }

        #region Member
        private NAudio.Wave.WaveIn sourceStream = null;
        private NAudio.Wave.DirectSoundOut waveOut = null;
        private NAudio.Wave.WaveFileWriter waveWriter = null;
        #endregion

        private void button_Start_Click(object sender, EventArgs e)
        {
            if (listView_Sources.SelectedItems.Count == 0) return;

            // Audio chain: WaveIn(rec) -> WaveInProvider -> WaveOut(DirectSoundOut)
            int deviceNumber = listView_Sources.SelectedItems[0].Index;

            // WaveIn: select the recording device
            sourceStream = new NAudio.Wave.WaveIn();
            sourceStream.DeviceNumber = deviceNumber;
            sourceStream.WaveFormat = new NAudio.Wave.WaveFormat(44100, WaveIn.GetCapabilities(deviceNumber).Channels);

            // WaveInProvider buffers the recorded data and exposes it as an IWaveProvider for playback
            WaveInProvider waveIn = new NAudio.Wave.WaveInProvider(sourceStream);

            // WaveOut
            waveOut = new DirectSoundOut();
            waveOut.Init(waveIn);

            sourceStream.StartRecording();
            waveOut.Play();
        }

        private void button_ToWav_Click(object sender, EventArgs e)
        {
            if (listView_Sources.SelectedItems.Count == 0) return;

            // Audio chain: WaveIn(rec) -> Callback() -> waveWriter

            // WAV file to record into
            SaveFileDialog save = new SaveFileDialog();
            save.Filter = "Wave File (*.wav)|*.wav;";
            if (save.ShowDialog() != DialogResult.OK) return;

            // Recording device number
            int deviceNumber = listView_Sources.SelectedItems[0].Index;

            // WaveIn: select the recording device
            sourceStream = new WaveIn();
            sourceStream.DeviceNumber = deviceNumber;
            sourceStream.WaveFormat = new WaveFormat(44100, WaveIn.GetCapabilities(deviceNumber).Channels);

            // Recording callback function
            sourceStream.DataAvailable += new EventHandler<WaveInEventArgs>(sourceStream_DataAvailable);

            // WAV output
            waveWriter = new WaveFileWriter(save.FileName, sourceStream.WaveFormat);

            // Start recording
            sourceStream.StartRecording();
        }

        private void sourceStream_DataAvailable(object sender, WaveInEventArgs e)
        {
            if (waveWriter == null) return;

            waveWriter.Write(e.Buffer, 0, e.BytesRecorded);
            waveWriter.Flush();
        }

        private void button_Stop_Click(object sender, EventArgs e)
        {
            waveOut?.Stop();
            waveOut?.Dispose();
            waveOut = null;

            sourceStream?.StopRecording();
            sourceStream?.Dispose();
            sourceStream = null;

            waveWriter?.Dispose();
            waveWriter = null;
        }

        private void button_Exit_Click(object sender, EventArgs e)
        {
            button_Stop_Click(sender, e);
            this.Close();
        }
    }
}
```